Xen and Swap

The way Xen works is that the RAM used by a virtual machine is not swappable, so the only swapping that happens is to the swap device used by the virtual machine. I wondered whether I could improve swap performance by using a tmpfs in the Dom0 for that swap space. The idea is that if only one of several virtual machines is using swap space at a time, a tmpfs could cache its most recently used swap data, so the virtual machine that is swapping heavily would finish its memory-hungry job sooner.

I decided to test this on Debian/Etch (both DomU and Dom0).

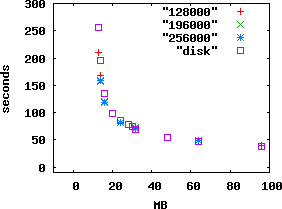

Above is a graph of the time taken in seconds (on the Y axis) to complete the command “apt-get -y install psmisc openssh-client openssh-server linux-modules-2.6.18-5-xen-686 file less binutils strace ltrace bzip2 make m4 gcc g++”. The X axis shows the amount of RAM assigned to the DomU in megabytes.

The four lines on the graph are for swap on a real disk (in this case an LVM logical volume) and for swap on tmpfs with different amounts of RAM backing it. The numbers 128000, 196000, and 256000 are the numbers of kilobytes of RAM assigned to the Dom0 (which manages the tmpfs). As you can see, it's only at about 24M of RAM and below that tmpfs provides a benefit. I don't know why it didn't provide a benefit with larger amounts of RAM: below 48M the time taken started increasing exponentially, so I expected there was potential for a significant performance boost.
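The comparison in the graph can be checked against the raw data below with a short Python sketch. It uses the single-DomU timings for swap on the disk and for tmpfs with 128000K of RAM in the Dom0 (the tmpfs configuration tested at the widest range of RAM sizes), converted from minutes and seconds to plain seconds, and computes the percentage of run time saved by tmpfs at each size.

```python
# Single-DomU run times in seconds, converted from the raw data below:
# swap on the LVM disk vs. swap on tmpfs with 128000K of RAM in the Dom0.
disk = {96: 37.416, 64: 47.607, 32: 68.200, 24: 85.517,
        16: 135.594, 14: 194.777, 13: 255.930}
tmpfs_128000k = {96: 40.121, 64: 48.207, 32: 72.394, 24: 81.929,
                 16: 119.042, 14: 168.517, 13: 209.391}


def saving_percent(disk_s: float, tmpfs_s: float) -> float:
    """Percentage of run time saved by tmpfs swap (negative means slower)."""
    return (disk_s - tmpfs_s) / disk_s * 100.0


# Largest RAM size first, matching the order of the raw data.
savings = {mb: saving_percent(disk[mb], tmpfs_128000k[mb])
           for mb in sorted(disk, reverse=True)}

for mb, pct in savings.items():
    print(f"{mb:3d}M: {pct:+6.1f}%")
```

With these figures the sign of the saving flips between 32M and 24M, and the saving grows as the DomU's RAM shrinks, reaching about 18% at 13M.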

After finding that the benefits for a single active DomU were not that great, I did some tests with three DomUs running the same APT command (with 256000K of RAM in the Dom0 for the tmpfs runs). With 16M of RAM and swap on the hard drive it took an average of 408 seconds, but with swap on the tmpfs it took an average of 373 seconds, an improvement of 8.6%. With 32M of RAM the times were 225 and 221 seconds, a 1.8% improvement.
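The three-DomU averages and percentages can be reproduced with a short Python sketch. The run times are the three-DomU figures from the raw data below converted to seconds, and the averages are rounded to whole seconds before the percentage is taken, as in the text.

```python
from statistics import mean

# Three-DomU run times in seconds, converted from the raw data below.
# "tmpfs" is swap on tmpfs with 256000K of RAM in the Dom0.
runs = {
    16: {"disk": [401.587, 410.837, 411.795],
         "tmpfs": [376.430, 374.832, 367.569]},
    32: {"disk": [222.100, 227.516, 226.333],
         "tmpfs": [221.583, 220.737, 221.071]},
}

results = {}
for mb, r in runs.items():
    # Round the averages to whole seconds first, as the text does.
    disk_avg = round(mean(r["disk"]))
    tmpfs_avg = round(mean(r["tmpfs"]))
    improvement = (disk_avg - tmpfs_avg) / disk_avg * 100.0
    results[mb] = (disk_avg, tmpfs_avg, improvement)
    print(f"{mb}M: disk {disk_avg}s, tmpfs {tmpfs_avg}s, {improvement:.1f}% faster")
```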

Incidentally, to make the DomU boot successfully with less than 30M of RAM I had to use “MODULES=dep” in /etc/initramfs-tools/initramfs.conf. To get it to boot with less than 14M I had to manually hack the initramfs to remove LVM support (I create my initramfs in the Dom0, so it gets drivers that aren't needed in the DomU). I was unable to get a DomU with 12M of RAM to boot with any reasonable amount of effort (I expect that compiling the kernel without an initramfs would have worked, but I couldn't be bothered).

Future tests will have to be on another machine, as the machine used for these tests caught on fire. This is really annoying, because if someone suggests an extra test I can't run it.

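To plot the data I put the following in a file named “command” and then ran “gnuplot command”:

```gnuplot
# Keep the lower bound of each axis fixed and autoscale only the upper bound
unset autoscale x
set autoscale xmax
unset autoscale y
set autoscale ymax
set xlabel "MB"
set ylabel "seconds"
# Each data file is expected to hold "MB seconds" pairs for one swap setup
plot "128000"
replot "196000"
replot "256000"
# Switch to PNG output; the final replot draws all four data sets to the file
set terminal png
set output "xen-cache.png"
replot "disk"
```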

In future, when doing such tests, I will use “time -p” (POSIX format), which displays a plain count of seconds rather than minutes and seconds and saves me from running sed and bc to fix things up.
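Until then, the sed and bc step can be replaced by a short Python sketch that converts raw lines such as “96M: 0m37.416s” into the two-column “MB seconds” rows that the gnuplot script reads from each data file. The `to_gnuplot_rows` name and the sample input are illustrative; lines that record an OOM or a failed boot instead of a time are skipped, as are the multi-run three-DomU lines.

```python
import re

# Matches raw lines such as "96M: 0m37.416s"; lines without a time
# (e.g. "28M: OOM ...") do not match and are skipped.
TIME_LINE = re.compile(r"^(\d+)M:\s+(\d+)m([\d.]+)s\s*$")


def to_gnuplot_rows(raw: str) -> list[str]:
    """Convert 'NNM: XmY.YYYs' lines into 'NN seconds' rows for gnuplot."""
    rows = []
    for line in raw.splitlines():
        m = TIME_LINE.match(line.strip())
        if m is None:
            continue
        mb, minutes, seconds = int(m[1]), int(m[2]), float(m[3])
        rows.append(f"{mb} {minutes * 60 + seconds:.3f}")
    return rows


raw_disk = """\
96M: 0m37.416s
28M: OOM process 2 with default initrd, needed MODULES=dep to make it boot
16M: 2m15.594s
13M: 4m15.930s
"""

print("\n".join(to_gnuplot_rows(raw_disk)))
```

Writing the resulting rows to a file named after the data set (for example “disk”) produces input in the form the plot commands expect.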

I am idly considering writing a program to exercise virtual memory for the purpose of benchmarking swap on virtual machines.
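A minimal sketch of such a program, written in Python and assuming the three parameters described in the comments below: allocate X bytes, touch Y bytes of it per second, and make a fraction Z of those accesses writes. The `exercise_memory` name, the 4096-byte page size and the uniformly random access pattern are illustrative assumptions, not an existing tool.

```python
import random
import time

# Assumed page size; touching one byte in a page is enough to make the
# kernel fault that whole page in if it has been swapped out.
PAGE_SIZE = 4096


def exercise_memory(total_bytes: int, bytes_per_second: int,
                    write_fraction: float, duration: float,
                    seed: int = 0) -> tuple[int, int]:
    """Hypothetical helper: touch random pages of a buffer at a limited rate.

    total_bytes: X, the amount of memory to allocate
    bytes_per_second: Y, the access rate (whole pages per second, minimum one)
    write_fraction: Z, the fraction of page accesses that are writes
    Returns the number of (read, write) page accesses performed.
    """
    if total_bytes < 1 or bytes_per_second < 1:
        raise ValueError("total_bytes and bytes_per_second must be positive")
    rng = random.Random(seed)
    buf = bytearray(total_bytes)                  # the memory under test
    pages = max(1, total_bytes // PAGE_SIZE)
    interval = 1.0 / max(1, bytes_per_second // PAGE_SIZE)  # seconds per access
    reads = writes = 0
    start = time.monotonic()
    next_access = start
    while time.monotonic() - start < duration:
        offset = rng.randrange(pages) * PAGE_SIZE
        if rng.random() < write_fraction:
            buf[offset] = (buf[offset] + 1) & 0xFF  # write: dirties the page
            writes += 1
        else:
            _ = buf[offset]                         # read: only faults the page in
            reads += 1
        # Sleep until the next access is due; if we are behind schedule
        # (e.g. waiting on swap), carry on immediately to catch up.
        next_access += interval
        delay = next_access - time.monotonic()
        if delay > 0:
            time.sleep(delay)
    return reads, writes
```

For example, `exercise_memory(64 * 1024 * 1024, 8 * 1024 * 1024, 0.3, duration=60)` run inside a DomU would allocate 64M and touch 2048 pages per second for a minute, about 30% of them as writes. Interpreter overhead likely limits how high a rate a Python version can sustain, so a C version may be needed for heavier loads.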

My raw data is below:

time apt-get -y install psmisc openssh-client openssh-server linux-modules-2.6.18-5-xen-686 file less binutils strace ltrace bzip2 make m4 gcc g++

Swap on the hard drive (LVM logical volume):

96M: 0m37.416s

64M: 0m47.607s

48M: 0m55.050s

32M: 1m8.200s

30M: 1m15.010s

28M: OOM process 2 with default initrd, needed MODULES=dep to make it boot

28M: 1m18.694s

24M: 1m25.517s

20M: 1m38.376s

16M: 2m15.594s

14M: 3m14.777s

13M: OOM when the initrd is not hacked to remove LVM stuff and drivers for Dom0 stuff

13M: 4m15.930s

12M: really won’t boot

128000K of RAM in Dom0 for caching (18M cache and 70M free), 256M swap per DomU on tmpfs.

96M: 0m40.121s

64M: 0m48.207s

32M: 1m12.394s

24M: 1m21.929s

16M: 1m59.042s

14M: 2m48.517s

13M: 3m29.391s

196000K of RAM in Dom0 for caching:

64M: 0m47.871s

32M: 1m12.078s

24M: 1m22.360s

16M: 2m0.336s

14M: 2m36.459s

256000K of RAM in Dom0 for caching:

64M: 0m47.949s

32M: 1m11.435s

24M: 1m21.402s

16M: 1m58.884s

14M: 2m39.081s

256000K of RAM in Dom0, three DomUs:

32M: 3m41.583s 3m40.737s 3m41.071s

16M: 6m16.430s 6m14.832s 6m7.569s

Hard drive, three DomUs:

32M: 3m42.100s 3m47.516s 3m46.333s

16M: 6m41.587s 6m50.837s 6m51.795s

3 thoughts on “Xen and Swap”


> I am idly considering writing a program to exercise virtual memory for the purpose of benchmarking swap on virtual machines.

I wrote such a thing (http://devin.com/lookbusy/) a ways back, as part of a somewhat silly app to make fake system load of various sorts. Might be useful to you, might not.

When I read the first paragraph here, it sounded like the silver bullet I've been looking for: a way to allow a DomU to use more RAM than it should for brief periods. The big balancing act of administering Dom0s (at least in my experience) comes from having a large number (20+) of DomUs on a single Dom0. Here the goal is to give each DomU as little RAM as possible, but to allow a way for it to temporarily grow without a reboot. Or, more specifically, to have just enough RAM to handle day-to-day operations, but to allow for more during special operations.

I’d suspect that the numbers you’re seeing have something to do with the way the swapping functionality in the kernel is implemented, though I really am not quite familiar enough with the kernel internals to make that judgement.

If you do throw together any Xen testing tools, I’ll definitely make use of them. I’m (un-)fortunate enough to get paid to work with Xen all day, and if I can get so much as a 1% improvement that can be quite important.

Devin: Interesting program; however, it appears to have no support for rate limiting of memory access. I want to be able to allocate X amount of memory and then access Y amount per second, with Z amount of that access being writes.

Alex: When I had the idea I thought it would be a silver bullet, but the evidence suggests that isn’t the case. I look forward to someone telling me about another way to do it or pointing out a flaw in my testing.